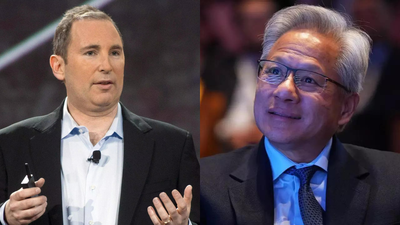

Amazon CEO Andy Jassy may have sent a “message” about artificial intelligence chips to Nvidia CEO Jensen Huang. In his annual letter to shareholders, Jassy wrote that Amazon’s “chips business is on fire,” adding: “There’s so much demand for our chips that it’s quite possible we’ll sell racks of them to third parties in the future.” The statements signal a shift in the AI hardware landscape and point to growing competition with Huang’s Nvidia.

While reaffirming Amazon’s partnership with Nvidia, Jassy pointed to rising demand for lower-cost, more efficient alternatives and highlighted the company’s in-house chips. He said Amazon’s chips business, which includes Graviton, Trainium and Nitro, has crossed a $20 billion annual revenue run rate and continues to grow. Jassy noted that demand remains strong, with Trainium2 largely sold out and Trainium3 nearly fully subscribed, indicating a gradual shift in what powers AI workloads on cloud platforms.

Apart from this, Jassy noted that Amazon’s cloud business, Amazon Web Services (AWS), recorded an AI revenue run rate of more than $15 billion in the first quarter of 2026, marking its first disclosure of direct financial returns from its AI efforts. He said the numbers are also “ascending rapidly” and noted that the broader cloud business could have grown faster if not for capacity constraints currently affecting the tech industry.

Read Amazon CEO Andy Jassy’s annual shareholder letter ‘message’ for Nvidia CEO Jensen Huang

In his annual shareholder letter, Jassy wrote:

“Our chips business is on fire, changes the economics for AWS, and will be much larger than most think. Virtually all AI thus far has been done on NVIDIA chips, but a new shift has started. We have a strong partnership with NVIDIA, will always have customers who choose to run NVIDIA, and we will continue to make AWS the best place to run NVIDIA. However, customers want better price-performance. We’ve seen this movie before.
In the CPU space, virtually all of the workloads ran on Intel chips until we invented Graviton in 2018. Graviton, which has up to 40% better price-performance than other x86 processors, is now used expansively by 98% of the top 1,000 EC2 customers. The same story arc is unfolding in AI. Our second version of our custom AI silicon (Trainium2) had about 30% better price-performance than comparable GPUs, and has largely sold out. Trainium3, which just started shipping at the start of 2026 and is 30-40% more price-performant than Trainium2, is nearly fully-subscribed. A significant chunk of Trainium4, which is still about 18 months from broad availability, has already been reserved. And, Amazon Bedrock, AWS’s primary (and very fast-growing) inference service, runs most of its inference on Trainium. Demand for Trainium is booming.

“Having our own hotly demanded AI chip opens up many possibilities, but perhaps none larger than the ability to lower costs for customers and secure better economics for AWS. At scale, we expect Trainium will save us tens of billions of capex dollars per year, and provide several hundred basis points of operating margin advantage versus relying on others’ chips for inference.

“Our annual revenue run rate for our chips business (inclusive of Graviton, Trainium, and Nitro—our EC2 NIC) is now over $20 billion, and growing triple digit percentages YoY. To dimensionalize this versus other chips companies, that run rate is somewhat understated by our currently only monetizing our chips through EC2. If our chips business was a stand-alone business, and sold chips produced this year to AWS and other third parties (as other leading chips companies do), our annual run rate would be ~$50 billion. There’s so much demand for our chips that it’s quite possible we’ll sell racks of them to third parties in the future.”