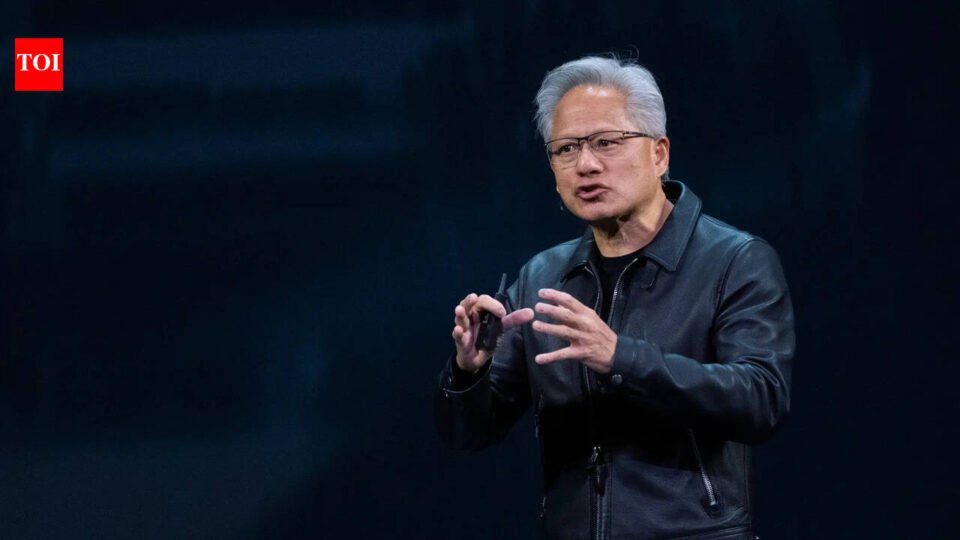

Jensen Huang has a message for the AI industry’s most vocal pessimists: you’re doing more damage than you think. Speaking at Nvidia’s GTC conference in California, and in a separate appearance on the No Priors podcast, the Nvidia CEO made his sharpest case yet against what he calls doomer rhetoric. His argument isn’t that AI is risk-free. It’s that scaring people away from the technology is itself the risk.

“Warning is good, scaring is less good, because this technology is too important to us,” Huang said during a panel discussion at GTC, responding to a question about how Anthropic could have better handled its now-public contract standoff with the Pentagon. As reported by Bloomberg, Huang believes the greatest US national security threat isn’t AI going rogue but Americans becoming so angry, fearful, or paranoid that the country adopts the technology more slowly than its rivals.

The Anthropic-Pentagon fallout gave Nvidia CEO Jensen Huang an opening

The context here matters. Anthropic, a major Nvidia customer, has been locked in a dispute with the Trump administration after CEO Dario Amodei insisted on contract terms barring its products from domestic surveillance of Americans and fully autonomous weapons. The administration responded by declaring Anthropic a supply-chain risk and moving to cut it from government work, a decision the company is now challenging in court.

Huang didn’t take sides in the legal fight. But he used the moment to draw a line between legitimate caution and rhetoric that spirals into something less useful. “It is not a biological being. It is not alien. It is not conscious. It is computer software,” he told the Bloomberg panel. “To say things that are quite extreme, quite catastrophic, that there’s no evidence of it happening, could be more damaging than people think.”

Jensen Huang sees the doomers as a Washington problem too

On the No Priors podcast, Huang recalled how deeply doom-oriented voices had embedded themselves inside DC policy circles, calling some of the narratives he encountered outright “inventions.” He was direct: “I don’t like it when doomers are out scaring people.” The distinction he kept drawing was between genuine concern, which he respects, and manufactured fear designed to shape regulation.

He isn’t naïve about the competitive stakes either. China, he’s argued repeatedly, will not slow down, and will pay far less attention to safety guardrails. That alone, in his reading, makes irrational AI anxiety an active liability for the US.

Despite the Pentagon friction, Huang told Bloomberg he remains bullish on Anthropic’s prospects, predicting the company could cross $1 trillion in revenue by 2030 and suggesting Amodei has been conservative with his own estimates.