A simple test by Dr Mikhail Varshavski, a practising, board-certified family medicine doctor based in New York, shows both AI’s strengths and its blind spots.

In one of his YouTube videos, he tested popular AI tools by asking them questions similar to what a medical student might face. He asked a basic question: What are the top three symptoms of cervical cancer?

The answers from multiple large language models, including tools from Meta, Google, and others, focused entirely on cancer of the cervix. They did not consider that ‘cervical’ could also refer to the neck. In medicine, context matters. And here, AI missed it.

At the same time, the same tools performed better on other questions. When asked about insulin and calories in weight loss, they gave more relevant answers.

This contrast captures the current reality. AI in healthcare is powerful, but not reliable in every situation. On World Health Day, the debate is at the forefront: can machines replace doctors, or do they still need human judgment?

Rise of AI in healthcare

Artificial intelligence is no longer experimental in medicine. It is already diagnosing diseases, analysing scans, and answering patient queries in large numbers.

Studies analysing thousands of medical questions show that AI systems often produce highly accurate and professionally structured responses. According to a study analysing over 7,000 medical queries across the US and Australia, AI responses consistently scored highly, often between 7 and 9 out of 10 across parameters such as clarity, completeness, and factual correctness.

In some controlled studies, AI has shown higher diagnostic accuracy than doctors in specific cases. For instance, a recent analysis by Microsoft found that an AI system correctly diagnosed up to 85.5% of complex medical cases, four times the rate of a group of 21 experienced physicians from the United Kingdom and the United States. The same analysis also noted that the AI system ordered fewer tests to reach a diagnosis.

At a medical AI competition in Shanghai, AI-assisted teams worked faster than doctors when analysing chest X-rays. The technology used could detect dozens of conditions in a single scan.

Speed is where AI clearly wins. It does not get tired, does not slow down under pressure, and can process thousands of cases simultaneously. In overburdened healthcare systems, this efficiency is a major advantage.

Where AI still fails

Despite its strengths, AI has critical weaknesses, and some of them are not small. The biggest issue is context. In the Shanghai competition, AI-assisted teams overlooked certain diagnoses that human doctors were able to catch. The human report was also more readable, with a warmer tone and better overall structure. This shows that while AI can process data quickly, it does not always interpret it correctly.

Misinformation is another risk. As Dr Rajmadhangi D, MBBS, MD, of Apollo Spectra Hospitals, Chennai, explains: “AI can be context-blind. They may provide a technically correct answer that is medically dangerous for a specific patient because they can’t see the patient’s physical, emotional, and social state. The patients might not know which information is relevant. AI generates a narrative that can feel authoritative even when it is factually wrong.”

According to a report by The Conversation, most studies testing AI focus on whether answers sound empathic, not whether they lead to better patient outcomes. That gap matters.

A response can be technically accurate but still unsafe if it ignores warning signs, misunderstands symptoms, or fails to adapt to the patient’s situation. This could mean delayed diagnosis, incorrect treatment, or patients relying on advice that does not fully apply to their condition.

There are also concerns about how AI handles misinformation. A recent study, Reuters reports, found that AI systems accepted false medical information up to 47% of the time when it was presented in an authoritative format, such as fake hospital documents. This raises serious concerns about how easily AI tools can be misled in real-world scenarios.

The empathy paradox

One of the most surprising findings in recent research is that AI may actually appear more empathetic than doctors, at least in certain situations.

A review of multiple studies published in the British Medical Bulletin found that AI-generated responses were rated as more empathetic than those written by healthcare professionals in a majority of cases, nearly 87% of the time.

But this does not mean machines truly feel empathy. The comparison itself has limitations. These studies evaluated written responses, not real-life interactions. AI had clear advantages: it had unlimited time to generate polished replies, and it did not have to deal with tone, body language, or emotional pressure.

Still, the finding raises an uncomfortable question: why do human doctors sometimes come across as less empathetic?

One user experience reflects how AI is increasingly being used beyond just information. Oshin, a Gurugram-based professional, says she turns to AI tools regularly for healthcare-related queries, especially in situations where access or comfort becomes a barrier. “I use AI for healthcare quite often. You can’t go to a doctor for everything, and there’s often hesitation in talking about certain issues. With AI, it feels like no one is judging you on the other side, especially as an introvert,” she says. “AI also helps me understand how serious an issue might be and whether I should visit a doctor. I once used AI to help convince my aunt to seek care when she was not trusting the doctor. In her case, that second check helped save her life,” she added.

Why doctors lose on empathy

The answer is not that doctors care less. It is that the system makes empathy difficult. Modern healthcare is heavily structured around protocols, documentation, and digital records.

Doctors often spend a large portion of their time on paperwork rather than interacting with patients. This has turned clinical work into a process-driven system, where efficiency often takes priority over human connection.

Burnout is another major factor. According to The Conversation’s report, a significant share of doctors globally report high levels of stress and exhaustion. When doctors are overworked, their emotional capacity shrinks. Maintaining empathy becomes harder, not because they do not want to, but because they are depleted.

What AI can’t replace

Despite all the advances, there are aspects of medicine that remain deeply human. AI cannot read unspoken emotions the way a doctor can during a face-to-face interaction. It cannot pick up on hesitation, fear, or discomfort in a patient’s body language.

It cannot provide physical reassurance, something as simple as holding a patient’s hand during a painful procedure.

It also cannot fully understand cultural context, personal values, or ethical dilemmas. Medical decisions are not always purely clinical. They often involve judgment calls that depend on a patient’s beliefs, preferences, and life situation.

There are moments in healthcare, such as dealing with terminal illness, breaking bad news, or supporting long-term suffering, where presence matters more than information. These are areas where machines still fall short.

Trust is also central to healthcare, and this is where AI faces another challenge. Patients are more likely to follow advice when they trust the source. While AI can provide consistent information, it may struggle to build that trust, especially in sensitive or complex cases.

There is also the issue of bias. AI systems are trained on existing data, which may not represent all populations equally.

So, who is better?

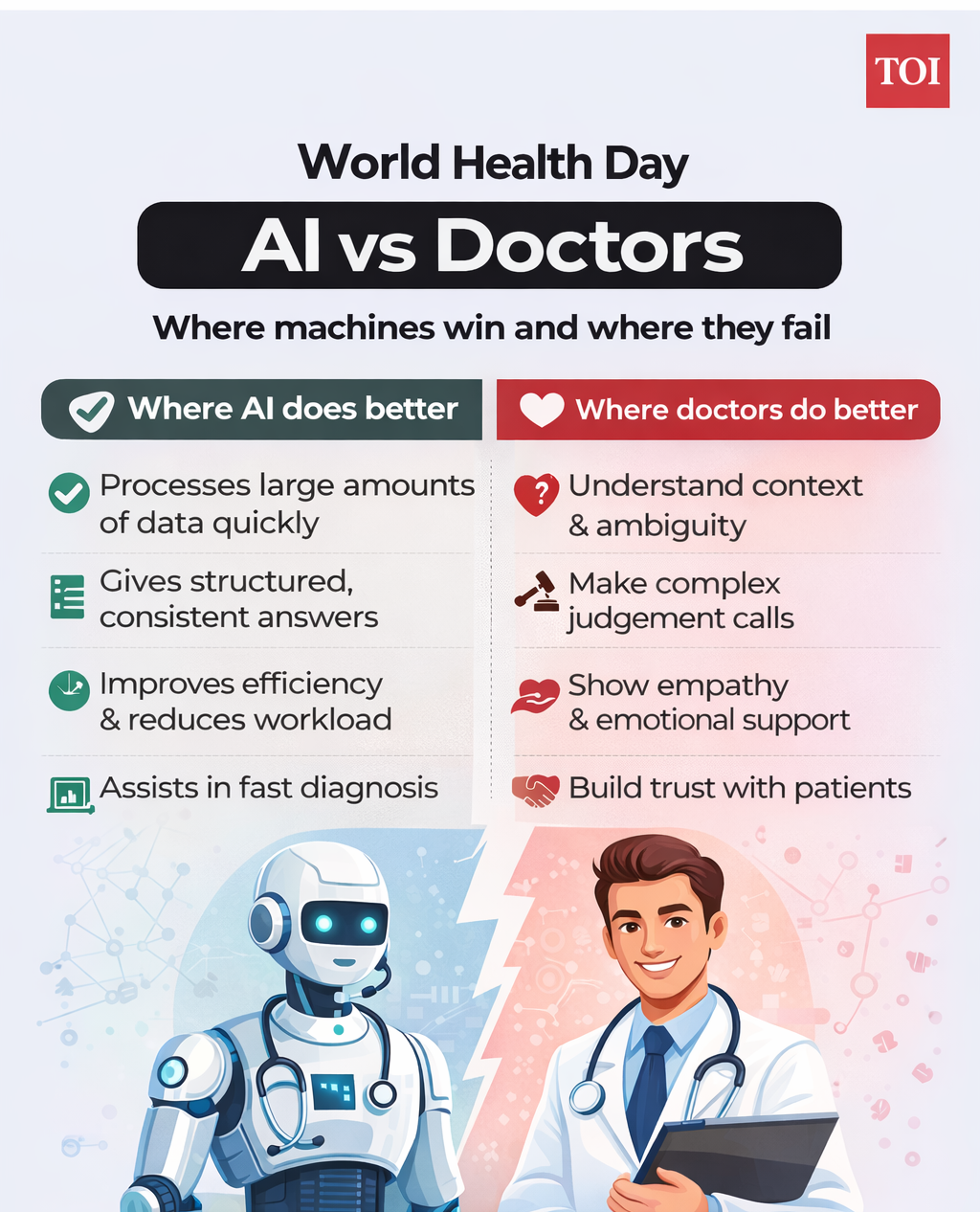

The answer is not straightforward. AI is clearly better when it comes to processing large amounts of data quickly, providing structured and accurate information, improving efficiency, and assisting in diagnosis, especially in cases that involve heavy data analysis.

Doctors, however, perform better in areas that machines still struggle with. They understand context and ambiguity, make judgment calls in complex situations, communicate with empathy and flexibility, and build trust and a human connection with patients.

In reality, the two are not direct competitors. They are solving different parts of the same problem, and healthcare works best when both are used together.

Most experts agree on one thing: AI is unlikely to fully replace doctors. Instead, the future of healthcare is likely to be collaborative.

AI can handle routine queries, assist with diagnosis, and reduce administrative burden. This could free up doctors to focus on what humans do best, which is caring for patients, making complex decisions, and providing emotional support. The biggest task, then, may not be replacing doctors but fixing the system around them.